Maximizing PHP 7 Performance with NGINX, Part II: Multiple Servers and Load Balancing

PHP is the programming language used for many popular frameworks and content management systems (CMSes). We have specific articles on the two most popular PHP-based CMSes, WordPress and Drupal.

Introduction: When to Use Multiple Servers

Part I of this blog post covers maximizing PHP web server performance on a single-server implementation, where the web server and the PHP application share a single server or virtual machine instance. It also covers caching on NGINX, which can be implemented in a single-server or multi-server environment.

As we described in Part I, for a single-server system, moving to PHP 7 and moving from Apache to NGINX both help maximize performance. Static file caching and micro-caching maximize performance on either a single-server setup or a multi-server setup, as described here. As a very rough estimate, single-server optimizations might achieve single-digit multiples of performance – double, quadruple, perhaps even an order of magnitude improvement – on a single server, preventing it from bogging down as traffic loads increase.

But you may need more growth than this; you may also need a solution that doesn’t require you to change your existing setup. If either of these descriptions applies to you, you have to suck it up and move to a multi-server implementation. Unlike single-server optimizations, you aren’t so concerned with specific changes in configuration and implementation of your existing setup; instead, you’re thinking big, treating a single application server as a single dynamo in a power plant that can scale up to serve needs at a global level. The work is more imaginative, and as much about design as about the details of implementation.

Moving to a multi-server setup is also a good time to consider NGINX Plus, rather than open source NGINX software. Here are some of the salient characteristics of each:

- Open source NGINX. Community support; wide use; open source and pre-built distributions; well understood by sysadmins (though not universally so); no license fee.

- NGINX Plus. All of the advantages of open source NGINX, plus (ha ha) pre-configured distributions; professional support; access to NGINX engineers; advanced load balancing, for HTTP and TCP; session persistence; application health checks; media delivery features; live activity monitoring; license fee, either per instance or volume/custom pricing.

The feature advantages of NGINX Plus are certainly desirable for a single-server setup, but they’re nearly indispensable for multiple servers:

- Professional support. Available only with NGINX Plus, professional support becomes much more important with a more complex setup and the large user base you’re supporting.

- NGINX consulting help. Consulting help is available with an NGINX Plus subscription. It can be used with either version of the software, but is more effective when applied against the stable distributions and more advanced features of NGINX Plus.

- Pre-compiled software distributions. Ready-to-use distributions enable support, reduce problems, and give you fewer places that you have to look when a problem does arise.

- Additional load balancing methods and session persistence. These features are likely to be indispensable to effective operation of a large, busy site.

- Monitoring and management. These system administration features of NGINX Plus are clearly vital in a multi-server setup which may have tens of thousands, hundreds of thousands, or millions of users.

- Health checks. Built-in active querying of servers identifies problems and warns you of hacker attacks before they affect users, giving you the opportunity to seamlessly prevent crashes, lost user sessions, and downtime.

To this last point, the biggest and most efficient sites are taking fairly radical measures to pre-emptively prevent downtime. Netflix purposefully unleashes bots that randomly knock down their own site’s servers, singly or in combination, just to test the self-healing capabilities of their site architecture. That’s the kind of resilience and robustness that your site should be heading toward as well.

The time savings from pre-compiled distributions, professional support, and additional features free site development and support staff up to tackle higher-level problems and to plan for growth.

Here in Part II, we will describe steps you can take to implement big changes in performance, up to an order of magnitude or more in throughput, by moving to a multi-server implementation.

Step 1: Decide on Your Upgrade Strategy

Your first step is to decide what steps you’re going to take, in what order, to improve site performance. Though it might seem counterintuitive, upgrading your existing single-server setup and moving to a multi-server implementation are separate, independent decisions – and the steps within each group of changes are largely independent decisions as well:

- There’s a strong drive throughout computing to work with the “latest and greatest” – whether that means the version of PHP you use, the web server you choose to support your application, high-performance caching implementations, or all of the above. That drive for optimization argues for implementing the steps in Part I first. All of them.

- However, it’s often simply not worth the time and effort to rewrite, test, and (re-)deploy applications, web server configuration files for NGINX PHP configuration, important modules, and so on. In this case, you can move to a multi-server implementation, with little or no change to your existing single-server setup. If you need multiple application servers, you just clone your current single-server setup, as described here in Part II, until you get around to optimizing it – if ever.

Now if you’re starting with a new, “greenfield” app, you should simply use the single-server and caching optimizations in Part I, and the multi-server and load balancing techniques described here in Part II, as one big holistic To Do list. But if you’re revising an existing app, and especially if you’re doing so under performance pressure – increasing traffic, with limited time, money, and staffing available – you can choose techniques a la carte, picking from single-server and multi-server implementation techniques to stay one step ahead of ever-increasing performance and feature requirements.

We can’t tell you exactly how to make those choices; each situation is sui generis (Latin for unique). You can get a pretty good idea by carefully considering each of the optimizations described here and comparing them to the particular performance challenges faced by your site, and the knowledge, time, and resources you have available to meet them. You may also decide that it’s worthwhile to get help in making and implementing these decisions. If so, as mentioned above, NGINX offers consulting packages that may represent a good time vs. money tradeoff in the short term, while transferring valuable frameworks, knowledge, and technical skills for use in the longer term.

Use the steps in Part I and below in the order listed, or in the order that best meets your needs.

Step 2: Choose a Reverse Proxy Server

A reverse proxy server is a server that receives requests from web clients – that is, browsers, such as Safari or Chrome – and then forwards them to one or more application servers, such as NGINX PHP servers or Apache PHP servers, or other servers, for processing. The reverse proxy server receives responses from the other servers, and sends them back to the client. (We have a more detailed definition of reverse proxy server here on the NGINX site.)

Clients only “see” the reverse proxy server; to them, the reverse proxy server is the site. However, all kinds of magic can happen behind the scenes. Using a reverse proxy server is simply another example of one of the most famous sayings in computer science, from David Wheeler: “All problems in computer science can be solved by another level of indirection.”

Why use a reverse proxy server? There are two main reasons:

- Performance. By adding a single reverse proxy server to a single application server, you usually gain performance. You do add an additional lag while requests and responses flow from the reverse proxy server to the application server, but these communications have very low latency, as the two machines are usually adjacent to each other on a LAN. At the same time, the application server runs so much faster at peak times, because it’s so much less likely to get overloaded, that site performance markedly improves.

- Scalability. With a reverse proxy server, you can add multiple application servers, offload application servers by moving caching to the reverse proxy server, more easily integrate to storage arrays or content delivery networks, and much more. Your site can become almost arbitrarily scalable, with predictable performance as you scale.
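As a rough illustration of offloading application servers by caching at the reverse proxy, the sketch below micro-caches successful responses for one second. The cache path, zone name (php_cache), and sizes are assumptions chosen for the example, not recommendations from this article; the upstream hostnames are placeholders.

```nginx
# Sketch only: the cache path, zone name, and sizes are illustrative assumptions.
proxy_cache_path /var/cache/nginx/php levels=1:2 keys_zone=php_cache:10m
                 max_size=1g inactive=10m;

upstream backend {
    server php-app1.example.com;
    server php-app2.example.com;
}

server {
    listen 80;
    server_name www.example.com;

    location / {
        proxy_cache php_cache;
        # Micro-cache: keep successful responses for one second.
        proxy_cache_valid 200 1s;
        # Collapse concurrent misses for the same URL into one backend request.
        proxy_cache_lock on;
        # Serve a stale copy while refreshing, or if the backend errors or times out.
        proxy_cache_use_stale updating error timeout;
        proxy_pass http://backend;
    }
}
```

Even a one-second lifetime can absorb a large share of requests for a busy page, because the application servers see at most roughly one request per second per cached URL.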

There are additional benefits. Security improves because you can focus your security efforts on a single machine that only handles Internet traffic. Also, NGINX has a number of security advantages, whether you implement it as a reverse proxy server, as a replacement for Apache on the application server, or both. Uptime is likely to improve – even beyond the benefits to uptime gained through enhanced security – because you can add or replace servers flexibly as needed, and because you can duplicate any resource and make it “hot-swappable” without downtime. You can even do this with the reverse proxy server itself.

There is a cost, of course, to using a second server, or additional servers beyond that. However, the cost of physical servers or cloud instance access is often dwarfed by the cost of time to try to optimize and manage single-server setups that are inadequate to performance demands. There are also opportunity costs and reputational costs to slow performance or traffic – that is, customer requests, attempted transactions, and so on – falling on the floor while hardware costs are minimized by the use of a single server. Most organizations today recognize software and personnel costs, not hardware or per-instance costs, as the gating factor to success.

Looking further ahead, you can use NGINX as a tool across a number of – wait for it – server modalities. That is, you can use NGINX to interface with, and as your web server for, internally controlled servers, private cloud instances (on different private clouds), public cloud instances (on different public clouds), and any mix you desire. A nimble NGINX sysadmin can easily tweak their implementation to look and work quite differently as needs change; lock-in concerns diminish.

Step 3: Implement Your Reverse Proxy Server

A big advantage of using a reverse proxy server is that you don’t have to change anything at all on your application server – including the web server configuration, whether that’s Apache or NGINX, or the PHP version. The web server tied to the application server is rather spectacularly ignorant of the fact that it’s no longer receiving traffic directly from the Internet, but it performs much faster as a result.

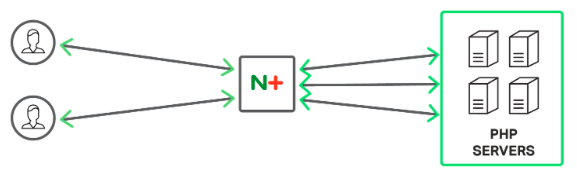

NGINX Plus is a reverse proxy and load balancer for your PHP backend

You can proxy requests to an HTTP server, such as another NGINX server, or a non-HTTP server, such as a server running PHP. FastCGI, uwsgi, SCGI, and memcached are some of the most widely used supported protocols.
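For the non-HTTP case, a minimal sketch of passing PHP requests to a FastCGI process manager such as PHP-FPM might look like the following. The address 127.0.0.1:9000 and the document root are assumptions for illustration; substitute the values your PHP server actually uses.

```nginx
server {
    listen 80;
    server_name www.example.com;
    root /var/www/html;   # assumed document root

    location ~ \.php$ {
        # Standard FastCGI variables shipped with NGINX.
        include fastcgi_params;
        # Tell the PHP process which script file to execute.
        fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
        # Assumed address of the FastCGI (PHP-FPM) listener.
        fastcgi_pass 127.0.0.1:9000;
    }
}
```

Here fastcgi_pass plays the role for FastCGI servers that proxy_pass plays for HTTP servers.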

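Key to NGINX configuration is the proxy_pass directive, which you specify inside a location block. In the following example, requests for /some/path/ are passed to the group of servers defined in the upstream block, and plain HTTP requests are redirected to HTTPS.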

upstream backend {

server php-app1.example.com;

server php-app2.example.com;

}

server {

listen 80;

server_name www.example.com;

# enforce https

return 301 https://$server_name$request_uri;

}

server {

listen 443 ssl;

server_name www.example.com;

# ssl_certificate and ssl_certificate_key directives are also required here

location /some/path/ {

proxy_pass http://backend;

}

}
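Although the application server needs no changes to sit behind a proxy, some PHP applications want to know the original host name, client address, or scheme. One common pattern, shown here as an optional sketch rather than a required step, is to forward that information in request headers. The header names are widely used conventions, not something this article prescribes.

```nginx
location /some/path/ {
    # Pass the host name the client asked for, not the upstream name.
    proxy_set_header Host $host;
    # Pass the client's address, since the backend otherwise sees only the proxy.
    proxy_set_header X-Real-IP $remote_addr;
    proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    # Tell the application whether the client connected over http or https.
    proxy_set_header X-Forwarded-Proto $scheme;
    proxy_pass http://backend;
}
```

The application has to be configured to trust and read these headers; without that, it simply ignores them.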

If you have experience with Apache, NGINX server configuration is difficult only because it’s new. People experienced with server configuration tend to describe NGINX configuration as fast, easy, and sensible – once you learn it, but there’s a learning curve for anything you haven’t done before.

Here on the NGINX website you will find detailed descriptions for configuring NGINX (open source and Plus) and specific configuration for NGINX Plus. You can also look up “PHP NGINX configuration” or similar search terms to find a useful set of third-party resources for getting started. And, you can visit the NGINX community and, for NGINX Plus customers, NGINX Plus support.

Step 4: Rewrite URLs

On any website, it’s desirable to rewrite URLs, so you can achieve several goals:

- Shorter. Shorter URLs are easier to read, which makes both development and use of the site easier. Shortening URLs also prevents them from exceeding length limits on entry fields, overflowing buffers, etc.

- Readable. A URL that the user can easily read and understand is reassuring to the user, and easier to share, though some sites don’t make the effort.

- Predictable. Users build a mental model of your site and expect that to be reflected in the URL, with site sections appearing as subdirectories within the URL and sensible filenames for web pages and assets such as PDF files.

- Stable. You can change your site architecture or the location of assets on your site without breaking existing hyperlinks to individual webpages and site assets.

In Apache, you rewrite URLs using the mod_rewrite module in the .htaccess (hypertext access) file, a configuration file that operates at the directory level, with lower-level files both augmenting and overruling higher-level files – an attribute that’s both powerful and confusing. Many Apache users simply inherit existing configurations from other server setups, and making changes is a matter of luck as well as skill.

In NGINX, you use the rewrite directive instead. The rewrite directive is simpler, more powerful, and easier to maintain than the Apache approach, but – like anything new – it has a (short) learning curve. See this blog post on creating new rewrite rules in NGINX and this one for converting Apache rewrite rules to NGINX.
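As one hedged example of a conversion, many PHP applications ship an .htaccess file that sends every request for a nonexistent file to index.php. In NGINX this is usually expressed with the try_files directive rather than with rewrite. The Apache lines in the comments are a typical front-controller pattern, shown for comparison only, and are not taken from any particular application.

```nginx
# Typical Apache front-controller rules (for comparison):
#   RewriteCond %{REQUEST_FILENAME} !-f
#   RewriteCond %{REQUEST_FILENAME} !-d
#   RewriteRule . /index.php [L]

location / {
    # Serve the file or directory if it exists; otherwise hand the
    # request to index.php, preserving the query string.
    try_files $uri $uri/ /index.php?$args;
}
```

A single try_files line replaces the three Apache lines, and it lives in the main configuration instead of in per-directory files.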

Following is an example of an NGINX rewrite rule using the rewrite directive. The rules match URLs that begin with the string /download and that also include the directory /media/ or /audio/ later in the path.

Each rule replaces that path element with /mp3/ and swaps the existing file extension for .mp3 or .ra. The $1 and $2 variables capture the path elements that aren’t changing. As an example, a URL such as /download/cdn-west/media/file1.wav becomes /download/cdn-west/mp3/file1.mp3. If the URL matches neither rule, the return directive sends a 403 (Forbidden) response.

server {

...

rewrite ^(/download/.*)/media/(.*)\..*$ $1/mp3/$2.mp3 last;

rewrite ^(/download/.*)/audio/(.*)\..*$ $1/mp3/$2.ra last;

return 403;

...

}
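The “stable” goal from the list above can be sketched with permanent redirects, so that old links keep working after content moves. All of the paths below are hypothetical and exist only for illustration.

```nginx
server {
    listen 80;
    server_name www.example.com;

    # A single moved asset (hypothetical paths): answer with a 301 redirect.
    location = /whitepapers/scaling.pdf {
        return 301 /resources/scaling-guide.pdf;
    }

    # A whole renamed section (hypothetical paths): keep the rest of the
    # path in $1 and redirect permanently.
    rewrite ^/products/(.*)$ /shop/$1 permanent;
}
```

For a simple one-to-one move, return is generally the cheaper choice because no regular expression has to be evaluated.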

Step 5: Implement Load Balancing and Session Persistence

Websites are scalable in two dimensions. Vertical scalability means getting more out of one server, whether by software changes (as described in Part I) or by buying a faster box or server instance. Horizontal scalability means adding boxes – most importantly, adding multiple application servers.

Implementing a reverse proxy server, as described in Step 3 above, allows you to use multiple application servers, giving you horizontal scalability: to get more performance, just add more servers. You can also centralize storage requests (using a server pool or content distribution network) and terminate SSL/TLS and HTTP/2 at the reverse proxy server. With the right software tools, such as those found in NGINX Plus, adding and removing servers can be done with no downtime at all.
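A minimal sketch of terminating SSL/TLS and HTTP/2 at the reverse proxy follows. The certificate paths are assumptions, and the http2 parameter on the listen directive is the older spelling; recent NGINX releases prefer a separate http2 directive, so check the documentation for the version you run.

```nginx
upstream backend {
    server php-app1.example.com;
    server php-app2.example.com;
}

server {
    # Clients speak TLS and HTTP/2 to the proxy only.
    listen 443 ssl http2;
    server_name www.example.com;

    ssl_certificate     /etc/nginx/ssl/www.example.com.crt;   # assumed path
    ssl_certificate_key /etc/nginx/ssl/www.example.com.key;   # assumed path

    location / {
        # Traffic to the application servers is plain HTTP on the internal network.
        proxy_set_header X-Forwarded-Proto https;
        proxy_pass http://backend;
    }
}
```

With this layout, certificates are installed and renewed in one place, and the application servers spend no CPU time on encryption.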

Now as soon as you add multiple application servers, two capabilities become very important:

- Load balancing. You want each new request to go to the least busy server so it gets handled more quickly. No one server becomes an impediment to the entire site. NGINX Plus has more complex load balancing algorithms than open source NGINX, and both include support for server weights. With NGINX Plus, you can also reconfigure on the fly, without downtime.

- Session persistence. If you hold state for a user on your application server, such as login or purchase data, you need the same user to keep getting sent back to the same application server throughout the user’s session. (You can also hold this live data centrally, if you’re very clever and willing to rewrite your app.) The session persistence methods described here are included only in NGINX Plus; open source NGINX offers simpler hash-based methods such as ip_hash.

Server weighting is a feature that works with several different load-balancing methods. You can increase the weight of a server (the default weight is 1) to increase the number of requests that go to that server.

The following code shows the weight parameter on the server directive for backend1.example.com:

upstream backend {

server backend1.example.com weight=5;

server backend2.example.com;

server 192.0.0.1 backup;

}
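To send each new request to the least busy server, as described above, one option available in both open source NGINX and NGINX Plus is the least_conn method. The sketch below combines it with weights and with passive failure detection; the max_fails and fail_timeout values are illustrative assumptions.

```nginx
upstream backend {
    # Prefer the server with the fewest active connections,
    # taking weights into account.
    least_conn;

    # After 3 failed attempts within 30 seconds, skip the server for 30 seconds.
    server backend1.example.com weight=5 max_fails=3 fail_timeout=30s;
    server backend2.example.com max_fails=3 fail_timeout=30s;

    # Used only when the primary servers are unavailable.
    server 192.0.0.1 backup;
}
```

Without a method directive, NGINX falls back to weighted round robin, which distributes requests by count rather than by current load.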

NGINX Plus supports a few ways of doing session persistence; one easy way is the “sticky learn” method. This method leverages the PHPSESSID cookie that PHP sets by default for sessions. With this method, if NGINX Plus sees this cookie being set by a server in a response, any subsequent requests with the same cookie are sent back to that same PHP server.

upstream backend {

server webserver1;

server webserver2;

sticky learn create=$upstream_cookie_phpsessid

lookup=$cookie_phpsessid

zone=client_sessions:1m

timeout=1h;

}

Please note that with this method you don’t have to use PHPSESSID; you can key off of any cookie you like.
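Two alternatives are sketched here for comparison. The first, ip_hash, is available in open source NGINX and pins clients by IP address. The second, sticky cookie, is an NGINX Plus feature in which NGINX Plus itself issues the tracking cookie. The upstream names and the cookie name srv_id are arbitrary choices for the example.

```nginx
# Open source NGINX: requests from the same client address go to the same server.
upstream backend_by_ip {
    ip_hash;
    server webserver1;
    server webserver2;
}

# NGINX Plus only: NGINX Plus sets a cookie naming the chosen server.
upstream backend_by_cookie {
    server webserver1;
    server webserver2;
    sticky cookie srv_id expires=1h path=/;
}
```

The ip_hash method is coarser, because many users behind one proxy or NAT gateway share an address and all land on the same server.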

Bonus Section: Monitoring and Management

Once you have multiple application servers, load balancing, and session persistence in place, management and monitoring become critical. With NGINX Plus, active health checks give you pre-emptive alerts as to problems with your servers. Beyond simple up and down checks, if a server is, for example, spitting out malformed content, NGINX Plus catches this and directs traffic away from the misbehaving server.
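A minimal sketch of an active health check in NGINX Plus is shown below. The /health.php URI, the match block name, and the “Fatal error” test are assumptions for illustration; a real check should request a page that exercises your application and test for content you know it returns.

```nginx
upstream backend {
    # Shared memory zone, required for active health checks.
    zone backend 64k;
    server webserver1;
    server webserver2;
}

# A response counts as healthy only if all conditions hold.
match php_ok {
    status 200;
    header Content-Type ~ text/html;
    body !~ "Fatal error";
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        # Probe every 5 seconds; mark a server down after 3 failures
        # and up again after 2 successes.
        health_check uri=/health.php interval=5 fails=3 passes=2 match=php_ok;
    }
}
```

The body test is what lets the check go beyond simple up and down: a server that answers 200 but returns an error page still fails.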

NGINX Plus has a built-in dashboard to monitor the health of your system. It has a wealth of data allowing you to monitor overall traffic flow in and out of your application, and more granular stats to see how well each individual server is performing.

The NGINX Plus stats are also exportable in JSON format. You can export this data to your choice of monitoring tool, such as Datadog or New Relic, or into your own custom monitoring tool.
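As a sketch of how the dashboard and the JSON data are exposed in current NGINX Plus releases, the configuration below enables the read-only API and serves the bundled dashboard page. The port, the access rules, and the root path are assumptions, and older NGINX Plus releases used a different status module, so check the documentation for your release.

```nginx
server {
    # Assumed management port, kept separate from public traffic.
    listen 8080;

    # Restrict access; adjust to your monitoring hosts.
    allow 127.0.0.1;
    deny all;

    location /api {
        # Read-only JSON statistics for monitoring tools.
        api write=off;
    }

    location = /dashboard.html {
        # Assumed install location of the bundled dashboard page.
        root /usr/share/nginx/html;
    }
}
```

Monitoring tools can then poll the JSON endpoints under /api, while operators view the same data in a browser at /dashboard.html.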

Conclusion

PHP is so easy to use, and so capable, that it’s a key driver in the profusion of websites and web applications that are catalyzing the digital revolution. PHP 7, which brings much higher performance to PHP, will inspire new expectations about the speed, performance, security, and manageability of websites.

Aside from simply converting your site’s code to PHP 7, the most powerful and widely used performance improvement strategies for your site all tend to include the use of NGINX. This pair of blog posts describes capabilities you can use in a single-server environment, plus caching techniques, and capabilities you can use in a multi-server environment.

It’s up to you where to start, but sites with growing traffic numbers are likely to end up in a similar place: with apps rewritten or newly written in PHP 7, running on NGINX at the server level and on a reverse proxy server, and with multiple application servers, load balancing, a content distribution network, management and monitoring – a big step in complexity and capability beyond the single-server implementations that are so easy to create and launch in PHP.

The post Maximizing PHP 7 Performance with NGINX, Part II: Multiple Servers and Load Balancing appeared first on NGINX.